AI Innovation: Orca and Progressive Learning

A refreshed look at Orca, explanation tuning, and why smaller language models need richer supervision, stronger data, and better evaluation.

Orca was interesting because it challenged a tempting shortcut in AI. If a small model copies a large model’s answers, maybe it can inherit the large model’s skill.

The Orca paper argued that this is not enough. A small model can copy style without learning the reasoning that made the answer useful. The difference matters. Copied style can seem strong at first, then fail under a harder test.

Microsoft Research trained a 13-billion-parameter model on richer signals from large foundation models. Those signals included explanation traces, step-by-step reasoning, and a mix of task formats. The result was a test of whether better supervision could make a smaller model more capable.

This post keeps the original Orca figures while tightening the text and adding clearer caveats about evaluation.

The Problem Orca Was Trying To Solve

Instruction-tuned models often learn from prompt-response pairs. That works well for format, tone, and basic task following. It can still leave a gap between sounding right and reasoning well.

The Orca paper identified three related problems:

- Shallow imitation signals from short model outputs.

- Narrow or limited training data.

- Evaluation that can overstate skill when a model mostly learns style.

That third point has aged especially well. As models improve, tests have to get more careful about what they measure. A model that writes a convincing explanation has not always solved the problem in a reliable way.

Progressive Learning

Orca’s answer was progressive learning from complex explanation traces. The idea was to show the smaller model a wide range of tasks and richer teacher outputs. Then test whether that signal improved its reasoning.

flowchart LR

A[Diverse tasks] --> B[Large model teacher]

B --> C[Explanation traces]

C --> D[Filtered training data]

D --> E[Smaller student model]

E --> F[Reasoning and knowledge evaluation]

This is a useful mental model for AI training more broadly. The target is not just “what answer did the teacher give?” It is “what made that answer useful?”

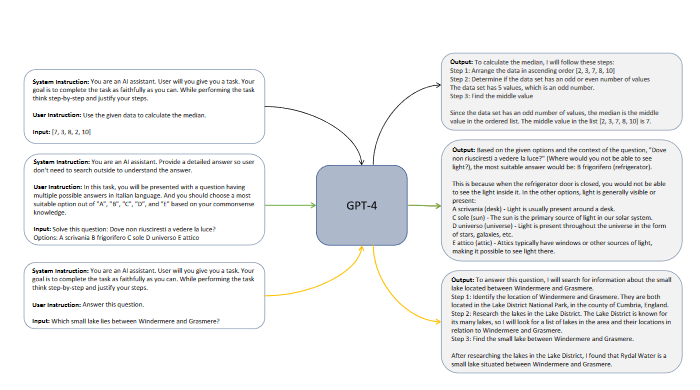

Original Orca Training Overview

The original post figure shows the high-level Orca training idea: richer teacher answers become the learning signal for a smaller model.

The original post figure shows the high-level Orca training idea: richer teacher answers become the learning signal for a smaller model.

The central insight is that the model sees more than labels. It sees explanations, the steps in between, and a mix of tasks. That makes the data harder to build, but often worth more in the end.

For engineers, the analogy is familiar. A code review that only says “wrong” teaches less than one that explains the failure, the expected behavior, and the tradeoff at play.

Explanation Tuning

Explanation tuning is the core technique behind Orca’s appeal. Instead of training a student model on final answers alone, the data also includes fuller reasons from stronger models. The student sees how the answer was reached.

The value of explanation tuning is the extra structure it gives the student model while it trains.

The value of explanation tuning is the extra structure it gives the student model while it trains.

The promise is clear. A smaller model can become more useful when it learns from examples that show how a stronger model breaks a problem into parts.

The caveat is equally important. Explanations are data, and data can be wrong, biased, incomplete, or overfit to a benchmark. A model can also learn the shape of an explanation without the reasoning behind it. Strong testing matters as much as strong training.

Evaluation: The Part That Keeps Getting More Important

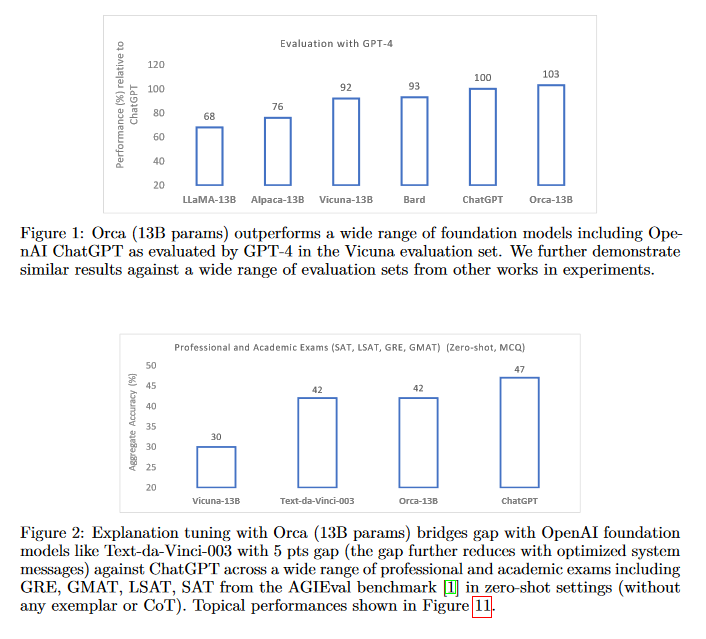

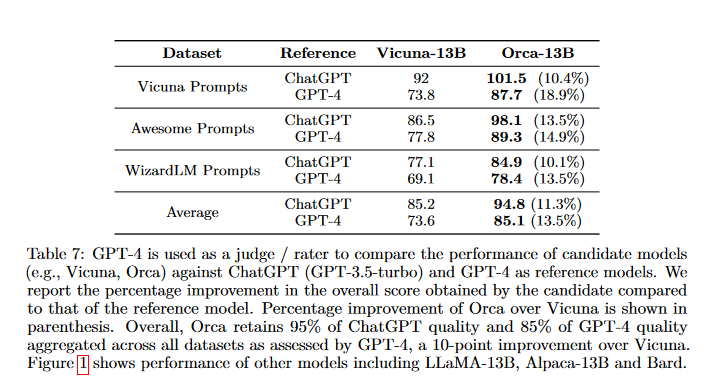

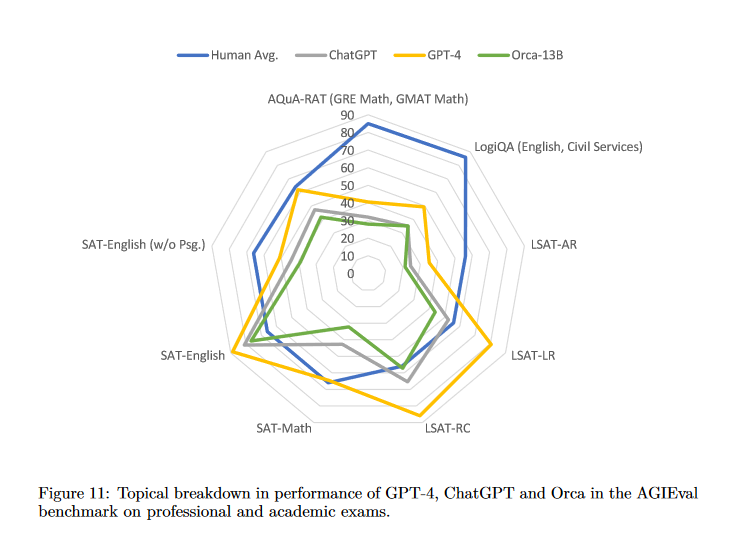

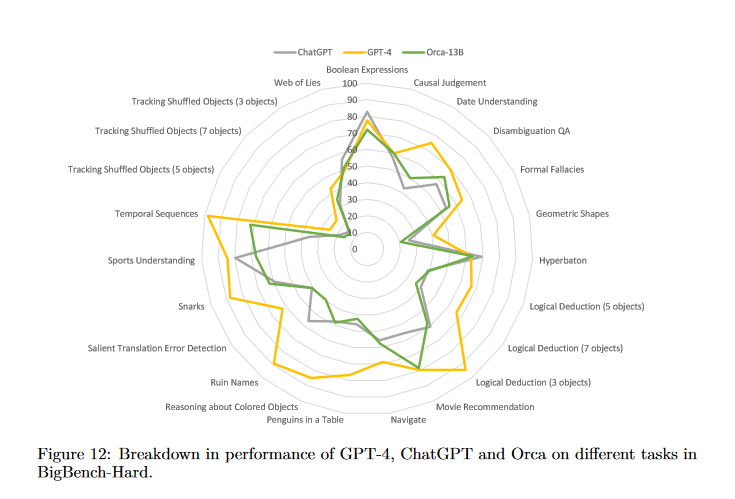

Orca reported strong results on several reasoning and knowledge benchmarks, measured against other instruction-tuned models of similar size. The paper showed gains on Big-Bench Hard and AGIEval, plus comparisons to ChatGPT and GPT-4.

Benchmark gains are useful, but they read as evidence, not as proof of general reasoning.

Benchmark gains are useful, but they read as evidence, not as proof of general reasoning.

The original figures help because they show what the authors measured, not just the headline claim.

The original figures help because they show what the authors measured, not just the headline claim.

The broader lesson is that model skill should be tested from many angles.

The broader lesson is that model skill should be tested from many angles.

Here Orca connects to a lasting problem in AI: tests can lag behind skill. A benchmark that is too narrow, public, leaked, or easy to game rewards the look of progress over the real thing.

That concern shows up again in When Coding Benchmarks Stop Measuring Progress. Benchmarks are useful. The open question is whether they stay reliable as models and incentives change.

What Still Feels Relevant

Several Orca ideas still hold up:

- Better supervision can matter as much as model size.

- A diverse task mix lowers the risk of narrow imitation.

- Teacher explanations can help pass on process, not just answers.

- Evaluation should test reasoning, safety, calibration, and robustness.

- Small models work best when their limits are clearly understood.

Smaller models can be cheaper, faster, easier to deploy, and easier to tune for one job. But they should not be treated as drop-in swaps for larger systems without testing in the target domain.

Caveats Worth Keeping in View

Reading Orca today, three caveats deserve close attention.

First, teacher-generated explanations are not always ground truth. They may hide mistakes, or carry reasoning that sounds fine but does not support the answer.

Second, benchmark scores can overstate real-world skill. A model can gain on a test and still fail in real work. Real work may need tools, memory, domain limits, or high-stakes review.

Third, training on powerful model outputs raises questions. Where did the data come from? How is it licensed? Can the results be reproduced? How much of the teacher’s behavior transfers?

Takeaway

Orca’s lasting contribution is a training philosophy rather than any single model checkpoint. To make smaller models do more than mimic style, they need richer learning signals and better tests.

That idea matters more as AI systems move from demos into software engineering, research, education, and business work. Model size is no longer the central question. What matters is what the model learned from, what was measured, and where it still fails today.

References

- Microsoft Research, “Orca: Progressive Learning from Complex Explanation Traces of GPT-4”, June 2023.

- Mukherjee et al., “Orca: Progressive Learning from Complex Explanation Traces of GPT-4”, arXiv:2306.02707, June 2023.